The Green Screen Legacy

Legacy mainframe applications are now being migrated to the cloud in droves. That got me thinking: haven’t we been here before? The evolution of application architectures from mainframes to client-server to cloud computing is fascinating when you step back and think about it.

Remember “green screen” mainframe terminals? These terminals, such as the IBM 3270 series, were used to access and run applications hosted on a centralized mainframe. At that time, large enterprises widely used mainframe computing to run many business-critical applications. The vast majority of application processing occurred on the mainframe (or “host,” as it was sometimes called), while the green screen terminal was simply used to view data from, and enter data into, the mainframe application. Because these terminals did no material application processing, they were sometimes referred to as “dumb terminals.”

The Client-Server Era

In the 1980s and 1990s, desktop computers with more powerful CPUs spread widely as DOS and Microsoft Windows grew in popularity. This brought a new trend in application development called the “client-server model,” in which applications became much more distributed now that users had more processing power on their desktops. This more modern approach to application architecture relied on the local desktop computer to perform more of the application processing, which was necessary for applications that adopted graphical user interfaces (GUIs). In fact, when I was still a developer in the early 1990s, many of the applications I built ran entirely on the desktop and did not communicate with a centralized server at all.

However, the mainframe was already so embedded in large enterprises that it was clearly here to stay, even as client-server application development proliferated. In the late 1990s, I worked for a company called Attachmate that built its business on selling mainframe terminal emulation software for Windows and other desktop environments. At the time, Attachmate was the largest privately held software company in the world, largely due to the importance of the mainframe. The problem it solved was how to avoid having both a mainframe terminal and a desktop computer on every desk in a large enterprise. Its software let users access the mainframe from their desktop computers by emulating a mainframe terminal as a desktop application, so companies could eliminate an expensive piece of hardware from almost every desk. The mainframe lived on!

Wait, isn’t this where we started?

Fast forward to 2020, and now we see all types of legacy applications, both mainframe and client-server, moving up to the cloud. Many of these applications now use a web-based presentation layer, and the web browser has become the modern-day green screen terminal for accessing enterprise applications in the cloud. The “host” has transformed from a centralized mainframe computer sitting in an enterprise’s data center into a massively distributed cluster of servers connected to the internet across the globe: the Cloud. Most of the processing for these applications now happens up in the cloud, back on the “host,” just like the old days. It’s déjà vu all over again!

Large enterprises with enormous investments sunk into their mainframe applications are asking questions such as:

- How do we continue to leverage the decades of intellectual property and critical business processes that are embedded into these mainframe applications while also digitally transforming?

- How do we get our legacy applications to the cloud quicker so we can optimize costs and start leveraging more modern software capabilities?

Recently, I started researching exactly how organizations are migrating mainframe applications to the cloud, especially those written in COBOL. There are several options for doing this and deploying these legacy COBOL applications in a somewhat “cloud native” state to take advantage of the promise of cloud computing. Cloud native services such as auto-scaling, database as a service, and machine learning could be extremely powerful ways to add modern capabilities to legacy applications. However, these COBOL applications will still need to be refactored to take advantage of cloud native services. For example, teams may have to replace the legacy mainframe-based database technology with a cloud-based RDBMS. Identifying exactly how these applications need to be refactored for the cloud can be time-consuming, and the skillsets needed to accurately assess legacy COBOL applications are getting harder to find. This is where Software Intelligence from CAST comes into play.

Software Intelligence for Faster Migration of COBOL to Cloud

Automating the assessment of a legacy application saves significant time and improves accuracy when planning a cloud migration. In a recent presentation, Microsoft Senior Solution Architect Jeremy Woo-Sam explains how his team of Azure blackbelts cut their application assessment time from 3 weeks with a manual method to 3 days with CAST Highlight, with the same level of accuracy.

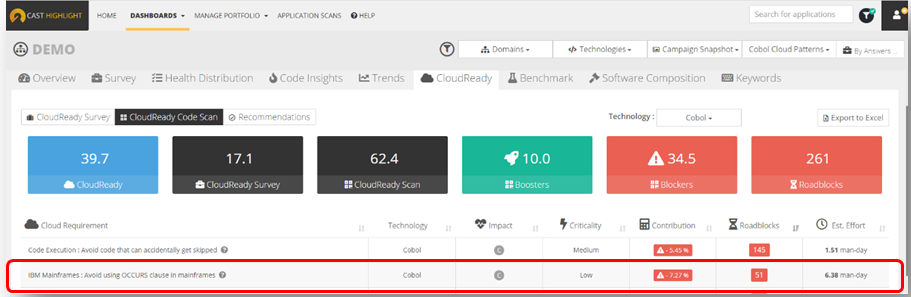

In the most recent release of CAST Highlight, we introduced 25 new Cloud Maturity code patterns specifically for COBOL applications. CAST Highlight analyzes COBOL source code and uses these patterns to automatically calculate a Cloud Maturity score for the application, while also identifying exactly what needs to be refactored and where these patterns occur in the code. For example, one new COBOL Cloud Maturity pattern is “Avoid using the OCCURS clause.” The OCCURS clause, which defines a table of repeated fields within a record, is common in COBOL applications that manipulate stored data. However, this kind of repeating group does not map directly onto the tables of a modern RDBMS, so it will need to be refactored if the application is going to adopt a cloud-based database.

The CAST Highlight screen shot above shows how the “Avoid using OCCURS clause” Blocker pattern was detected in this COBOL application, along with the number of occurrences and the estimated remediation effort.

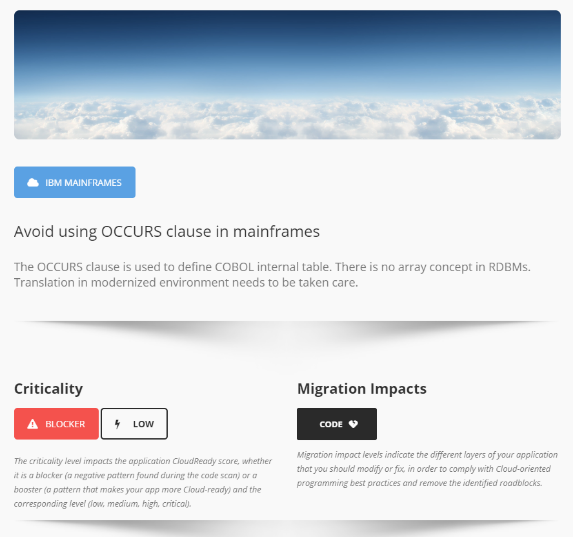

The screen shot above, from the CAST Highlight knowledgebase, provides more detail on this Blocker pattern.
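To make the OCCURS refactoring a little more concrete, here is a minimal Python sketch of one common way a repeating group is handled when the data moves to a relational database: the OCCURS table is split out into a separate child table, with one row per populated entry and a foreign key back to the parent record. The record layout, field names, and widths (`CUST-ID`, `CUST-PHONE`, `OCCURS 3 TIMES`) are hypothetical and chosen only for illustration. This is not how CAST Highlight remediates the pattern, and a real migration would also have to deal with details this sketch ignores, such as EBCDIC encoding and packed-decimal fields.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical COBOL layout mirrored here (illustrative only, not from the article):
#   01 CUSTOMER-REC.
#      05 CUST-ID      PIC X(5).
#      05 CUST-PHONE   PIC X(10) OCCURS 3 TIMES.
CUST_ID_LEN = 5
PHONE_LEN = 10
PHONE_OCCURS = 3


@dataclass
class Customer:
    """Parent row: one per COBOL record."""
    cust_id: str


@dataclass
class CustomerPhone:
    """Child row: one per populated entry in the OCCURS table."""
    cust_id: str  # foreign key back to Customer
    seq: int      # 1-based position of the entry in the original OCCURS table
    phone: str


def normalize(record: str) -> tuple[Customer, list[CustomerPhone]]:
    """Split a fixed-width record into a parent row plus child rows."""
    expected = CUST_ID_LEN + PHONE_LEN * PHONE_OCCURS
    if len(record) != expected:
        raise ValueError(f"expected {expected} characters, got {len(record)}")

    cust_id = record[:CUST_ID_LEN].strip()
    phones: list[CustomerPhone] = []
    for i in range(PHONE_OCCURS):
        start = CUST_ID_LEN + i * PHONE_LEN
        phone = record[start:start + PHONE_LEN].strip()
        if phone:  # skip unused (blank) slots in the fixed-size array
            phones.append(CustomerPhone(cust_id, i + 1, phone))
    return Customer(cust_id), phones
```

For example, `normalize("C0001" + "5551234567" + "5559876543" + " " * 10)` returns the customer `C0001` plus two phone rows with sequence numbers 1 and 2. The blank third slot is dropped rather than stored as an empty column, which is one of the practical differences between a fixed-size COBOL array and a child table.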

We all know time is money. At a time when most enterprises are focused on cost optimization, CAST Highlight uses automation to reduce the time and cost of assessing legacy COBOL applications for cloud migration. Its fact-based approach also yields a more accurate assessment, which gets legacy applications to the cloud more safely and lets organizations start reaping the cost benefits of the cloud sooner. Ultimately, mainframe applications are not going anywhere soon, except maybe up to the cloud.
