In my previous post, we looked at the drivers pushing CIOs to increase investment in IT modernization, and the eight steps that are particularly challenging in legacy modernization projects. Let’s now look at why rewriting applications in order to modernize them is highly risky and often not a cost-efficient approach.

Rewriting Applications – A High-Risk Approach to IT Modernization

CAST regularly helps clients analyze legacy applications to understand their current state, identify the causes of important shortcomings, and determine the optimal modernization approach. We’ve seen many examples where a rewrite was initially assumed to be the way forward but was then abandoned once a deeper analysis uncovered cheaper and less risky incremental approaches.

Rewriting an application is therefore rarely as inescapable a solution as it first appears. That is fortunate, because rewriting an application is not only costly but also subject to several specific risks that traditional new development does not face. Let us look at some of the risks that are easily overlooked:

- Scope creep. Rewriting an application is easily seen as an open invitation to add more and more requirements, for example to avoid disappointing stakeholders who expect the shiny new application to solve all their past problems and long-overdue needs. Rewriting projects also often fall behind schedule, and it is tempting to compensate for the delays by adding features to appease the business or users. In addition to functional scope creep, there will also be a strong temptation to introduce other long-overdue changes, for example to tools, practices or technology, since the occasion is seen as “now or never.”

- Implicit requirements. With legacy applications, documented requirements have rarely kept up with reality. Requirement practices may have changed over time, improvements may have been managed informally, non-functional requirements may have been tested but never written down, resourceful developers may have anticipated needs, or features may have sneaked in disguised as bug fixes. Important non-functional requirements, such as performance or response time, that the legacy application got right from the start may never have been recognized and captured, and may only be discovered after deployment. The lack of adequate requirements is often difficult to detect, or even admit, so the problem easily goes unnoticed. Finally, “retro-documenting” years of work is both time-consuming and tedious, making it easy for everyone to quickly agree that it simply isn’t worthwhile. But missed requirements always surface sooner or later, often towards the end of a project or, worse, after rollout, leading to delays or unhappy users.

- Unrealistic expectations from stakeholders and end-users. The cost of rewriting is high, so expectations are likely to be equally high, and each stakeholder will expect to finally get the improvements that the legacy application was never able to provide.

- Low tolerance for regressions or missing features. A wholly new application is expected to have rough edges, but when an existing application is replaced, the assumption is that the new one should not only do everything the old system did, but also do it better.

- Balancing legacy features and new features. The two previous points add up to a real challenge when rewriting an application. A new application that provides new features but misses old ones may not be able to replace the legacy application. A new application (or at least its first release) that only provides the same features as the legacy one will have difficulty demonstrating its value. And a big-bang approach covering all legacy features as well as all improvements is ambitious and extremely difficult to pull off. Finally, having the legacy application and the new one coexist will not only be complicated for users, but also add more requirements to the rewriting project.

- Change overload. Rewriting an application is likely to introduce many changes at once: new architecture, new frameworks, new components, new programming languages and tools, new development practices, new environments like the cloud, … Even if the functional expectations are known, a team will require significant time to establish the new foundation of working practices it needs to be fully efficient.

- Challenging staffing. When rewriting an application, it can be near-impossible to assemble an effective team. The existing team may resist new technologies or not use them well, while a new team is unlikely to have sufficient knowledge of the domain and the implicit requirements, and will often have to learn by costly trial and error (and, depending on the context, the old team may not be motivated to help them get it right). Outsourcing the rewrite will only accentuate these challenges.

- Unrealistic expectations of existing tests. As mentioned in the previous post, the need for validation should not be underestimated. However, when rewriting an application, the probability that existing test suites can be reused is very low, since all types of tests are likely to be affected in one way or another.

- Underestimating the rollout. Whenever legacy applications are changed, it is easy to assume that little or no training will be needed. The reality can be the exact opposite: more training and more time may be necessary to unlearn outdated practices and overcome resistance. In addition to training, the arrival of a rewritten application is likely to reveal little-known uses, such as local integrations or reports relying on legacy data formats, many of which may need to be updated or recreated so that the business can continue as usual.

- Overly optimistic estimates. IT estimates always tend to be optimistic. But when rewriting an application, there is an overarching risk of assuming that since it has already been done once, it will be much easier. As the points above show, the opposite may be true. In addition, since the business case for rewriting an application is often not that strong, the stakeholders behind the project may tend to focus on the best-case scenario.

Together, these risks easily lead to delays and cost overruns, which invariably increase the pressure on the project and lead to cutting corners, creating a vicious circle. If this spins out of control, the project may be abandoned altogether. Even if it is not, it may be off to a bad start, loaded with technical debt and on the path to prematurely becoming another troubled legacy application.

It is therefore not surprising that Gartner advises CIOs to look at alternatives to rewriting applications. “Application modernization is not one ‘thing.’ If you’re faced with a legacy challenge, the best approach depends on the problem you’re trying to solve,” says Gartner research director, Stefan Van Der Zijden. “Replacement isn’t the only option.”

Incremental IT Modernization – The Spectrum of Options

The alternative to rewriting an application is an incremental approach, where parts of an application are improved or replaced in a controlled manner. The specific steps will obviously depend not only on the objectives and available means, but also on the existing architecture and the technologies and components used. It will often make sense to employ several options, although not necessarily in a single transformation step.

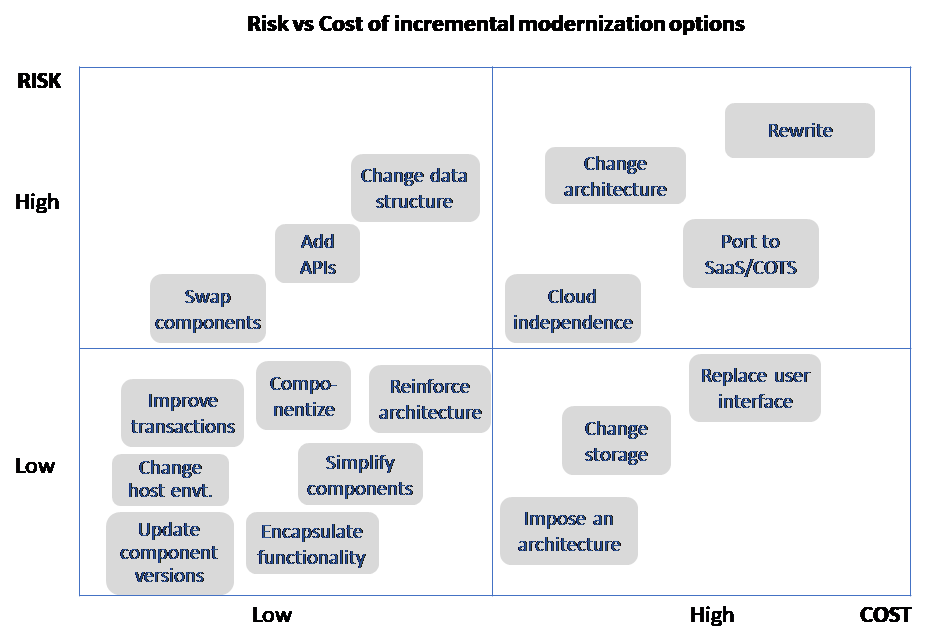

Before we go into the details, the following diagram gives an overview of the risk and cost of the different incremental options. Note that the specific risk and cost will of course depend on the context.

Going through them in rough order of complexity:

- Update component versions. Keeping frameworks, libraries and open source components up to date should be common practice. In reality, it is often neglected, exposing applications to security and reliability risks. If many components need significant updates at the same time, the impact on existing code, and the risk of regressions, may reach a level where an actual modernization project is required.

- Change host environment. Many applications can be lifted directly to another platform, typically the cloud, although even a lift-and-shift approach may include some minimal adaptation to the new environment. CAST has developed tools that assess the readiness of .NET and Java applications for running in the cloud, with both positive and negative results, often to the surprise of those familiar with the application. For strategic or business-critical applications, the lift-and-shift step is very likely to be followed by a deeper adaptation of their architecture to the cloud environment.

- Open up access via APIs. For example, add service APIs to enable broader access to existing proven business functionality that was previously locked up inside a legacy application.

- Simplify complex components. Frequently changed core modules may have degraded over time, reaching a level of complexity or technical debt where the only realistic option is to simplify them, typically by completely reworking them.

- Encapsulate. An application may not have the architecture and layers necessary to enable other required changes. In that case, a relatively simple first step is to introduce internal encapsulation APIs to understand and clarify how the encapsulated code is used. For example, if an application uses inline SQL queries freely, there is no simple way of knowing exactly how it interacts with the database. Replacing the queries with high-level functional calls makes that use visible and makes it much simpler to move to another type of database. Such encapsulation also tends to clean up duplicate and unmaintainable queries spread across the code base. Encapsulation can also be used to avoid creating direct dependencies on proprietary cloud components and to reduce future porting costs. However, experience shows that developers can find it difficult to respect such encapsulation, so it will likely require either strong discipline or tool-supported enforcement.

- Componentize functionality. Valuable parts of monolithic applications may be extracted as separate components, either to make them available as libraries for other applications or to enable scalability, for example by running them as microservices.

- Reinforce the original architecture. The original, intended architecture will often have degraded over time, due to quick fixes, lack of knowledge, or lack of enforcement. Such nonconformities are dangerous because they are difficult to detect, yet may severely impact almost any critical aspect of an application, including security, data access and data consistency, as well as performance and stability. When CAST helps customers with architecture audits, they are often surprised to see just how often the code does not comply with the required architecture.

- Improve problematic transactions. The demand for modernization often comes from problems in the most used or most critical user transactions. A frequently used screen that is very slow to update, or an interaction with lots of manual input that may crash and lose data, can be enough to put an application on the modernization shortlist. But investigating such issues from a cross-functional, transaction-level perspective may often identify solutions that cannot be found simply by investigating components or layers individually.

- Swap components. Well-defined components can be replaced with better or more standardized ones, for example when legacy components are no longer maintained, when in-house components duplicate other in-house components, or when in-house components can be replaced with standard ones. Since new components are rarely drop-in replacements, swapping them can have a significant impact on the code that used the previous component.

- Change storage. Changing the storage and persistence mechanism can range from replacing archaic flat files with a database, substituting newer products for an old RDBMS, or replacing costly commercial databases with open source ones, to moving to new types of databases such as NoSQL, for example to improve support for big data.

- Change cloud type. After a first simple step of lifting an application into the cloud, it will typically make sense to continue with a deeper cloud adaptation to fully benefit from the available cloud environment. This will mean first moving fully to an IaaS (Infrastructure as a Service) environment and later adapting further to a specific PaaS (Platform as a Service).

- Cloud independence. Integrating closely with a cloud vendor’s environment creates lock-in and reduces negotiation leverage. In theory, such lock-in could have been avoided when the application was first ported, but organizations often initially lack the experience or technology to do so. As the consequences of lock-in materialize (sometimes combined with a realization that smaller cloud providers may go out of business), it increasingly makes sense to rework cloud applications so that moving to other cloud providers becomes possible, fast and painless.

- Change data structure. The original data structure and models may need to be revisited, either as the consequence of technology changes, to improve performance, or for example to better separate data for security reasons. Since changes to data may ripple through an application and can have unexpected consequences, it is important to be able to trace the impact across an application to get a full picture in terms of scope and cost.

- Impose an architecture. While most enterprise applications will at least have an intended architecture, it is quite common that small, local or less strategic applications have evolved over time with no particular focus on structure or architecture. While some may be beyond salvation, others can be brought back on track by defining a reasonable architecture and gradually implementing it, over time making the application easier to enhance and less error prone.

- Replace user interface. Adding a modern and more intuitive user interface without changing the business logic and the backend may help extend the life of a legacy application, whether simply to update the look and feel or to enable web access. The application architecture will determine how easy this is: with a clean, view-based architecture like MVC it can be straightforward, but if the user interface and the business logic are intertwined it may be highly complex or simply infeasible.

- Change the architecture. Sometimes the original architecture is simply not fit for new types of requirements and must evolve, for example by splitting up components or by introducing specific layers or new components. Long-overdue improvements may never have been made because the existing architecture made them too complex, so updating it can have a positive ‘ketchup’ effect. The need to support or better integrate with an enterprise architecture, or to use new microservices, will also require fairly deep adaptations of the architecture. Interestingly, the more needed an architecture change is, the tougher it is likely to be, simply because easy changes would have been made long ago.

- Port to SaaS or COTS. When new commercial SaaS (Software as a Service) or COTS (Commercial Off-The-Shelf) solutions have emerged since a legacy application was created, it can make sense to replace the legacy application with such a solution to reduce maintenance and probably also gain long-overdue industry-standard features. For this type of modernization, it will be important to identify and port the company-specific code, as well as to adapt the standard SaaS/COTS features to support company-specific needs.

- Rewrite the application. As discussed above, rewriting is not an incremental option, but is kept in this list for comparison.
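To make the Encapsulate option more concrete, here is a minimal Python sketch of replacing inline SQL with high-level functional calls. It assumes a hypothetical `customers` table. `Customer`, `CustomerRepository`, `SqliteCustomerRepository`, `InMemoryCustomerRepository` and `greet_customer` are illustrative names, not part of any CAST tool or specific framework. In the "after" version, business code depends only on the repository interface, so every database interaction is visible in one place and the backend can be swapped without touching callers.

```python
import sqlite3
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass(frozen=True)
class Customer:
    id: int
    name: str
    country: str


# BEFORE: inline SQL embedded in business logic. Finding every query that
# touches the (hypothetical) customers table means searching the code base.
def greet_customer_inline(conn: sqlite3.Connection, customer_id: int) -> str:
    row = conn.execute(
        "SELECT name FROM customers WHERE id = ?", (customer_id,)
    ).fetchone()
    return f"Hello, {row[0]}!" if row else "Hello, stranger!"


# AFTER: business logic depends only on this high-level functional interface.
class CustomerRepository(Protocol):
    def find_by_id(self, customer_id: int) -> Optional[Customer]: ...

    def find_by_country(self, country: str) -> list[Customer]: ...


class SqliteCustomerRepository:
    """The single place that knows the SQL and the table layout."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self._conn = conn

    def find_by_id(self, customer_id: int) -> Optional[Customer]:
        row = self._conn.execute(
            "SELECT id, name, country FROM customers WHERE id = ?", (customer_id,)
        ).fetchone()
        return Customer(*row) if row else None

    def find_by_country(self, country: str) -> list[Customer]:
        rows = self._conn.execute(
            "SELECT id, name, country FROM customers WHERE country = ? ORDER BY id",
            (country,),
        ).fetchall()
        return [Customer(*r) for r in rows]


class InMemoryCustomerRepository:
    """An alternative backend with the same interface; callers are unchanged."""

    def __init__(self, customers: list[Customer]) -> None:
        self._by_id = {c.id: c for c in customers}

    def find_by_id(self, customer_id: int) -> Optional[Customer]:
        return self._by_id.get(customer_id)

    def find_by_country(self, country: str) -> list[Customer]:
        matches = [c for c in self._by_id.values() if c.country == country]
        return sorted(matches, key=lambda c: c.id)


def greet_customer(repo: CustomerRepository, customer_id: int) -> str:
    # No SQL here: only a functional call on the encapsulation API.
    customer = repo.find_by_id(customer_id)
    return f"Hello, {customer.name}!" if customer else "Hello, stranger!"


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, country TEXT)")
    conn.executemany(
        "INSERT INTO customers VALUES (?, ?, ?)",
        [(1, "Ada", "UK"), (2, "Linus", "FI")],
    )
    print(greet_customer(SqliteCustomerRepository(conn), 1))  # Hello, Ada!
    print(greet_customer(InMemoryCustomerRepository([Customer(2, "Linus", "FI")]), 2))  # Hello, Linus!
```

Because `greet_customer` accepts any object that satisfies `CustomerRepository`, the same call works against either backend. Applying the same pattern to a cloud vendor’s storage or messaging SDK is one way to limit the lock-in described under Cloud independence. Note that nothing in the language stops other modules from issuing raw SQL. Keeping the encapsulation intact still takes the discipline or tool-supported enforcement mentioned above.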

Build Efficient IT Modernization Capabilities

With increasing investments in legacy modernization, and high expectations of quick and visible results, companies need to build solid competence in the range of different modernization approaches, as well as in the practices and tools that will enable their teams to execute such projects quickly and efficiently. These projects often turn out to be far more challenging than originally anticipated.

It is interesting to note that more than a decade ago, Bill Murphy, research director at Forrester, anticipated that modernization capabilities would become a strategic priority for CIOs, stating: “Legacy modernization is morphing into a strategic function. IT can’t afford to toss away reliable application transactions indiscriminately.”